Anil Sabharwal, VP of Google Photos and Communications, showcased some of the ways that artificial intelligence and machine learning technologies are being used within the company and by its partners during an event attended by Ausdroid at Google's Sydney headquarters this morning.

Sabharwal told the audience of local media that the cookies given to us were a fun example of how artificial intelligence could create new food flavours. That story is detailed in a Google blog post; however, I can advise that the cookie had an interesting taste, predominantly of cardamom, salt and chocolate.

More seriously, he told the audience that Australia can and should be a leader in AI: we have smart people, the necessary resources and the opportunities.

The theme of the event was how AI and machine learning could automate laborious tasks and be a multiplier of human ingenuity if more Australian businesses, governments and researchers used the available tools to improve their productivity.

Sabharwal also announced that a standalone Google Lens app would be available from today in the Play Store for all Android devices. A Colour Pop feature is also currently rolling out in Google Photos, which will identify images that it thinks Colour Pop can work on, letting you apply the filter and remove colour from the background behind a subject.

He also pointed out the artificial intelligence and machine learning features that Google users enjoy every day, such as the automated photo video created for Mother’s Day recently, Google Translate, used by travellers to understand the meaning of foreign language signs, and Gmail’s continuing improvements in guessing what you’re about to type, suggesting contextually relevant words and even whole sentences.

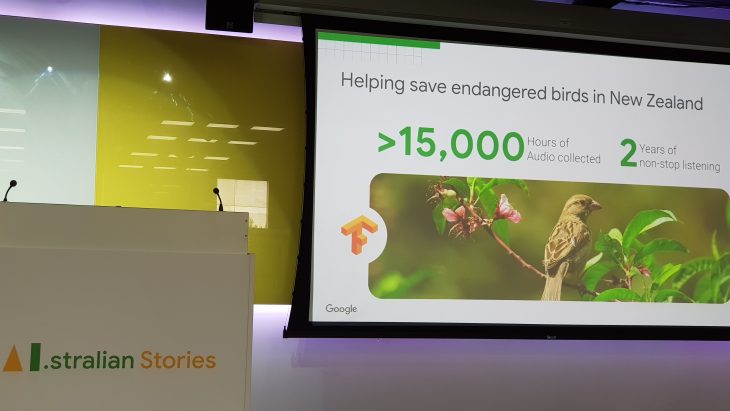

The event then moved on to showcase how researchers across Australia and the world were using artificial intelligence and machine learning tools like TensorFlow in the fields of conservation, language and health.

Indigenous people in the Amazon rainforest are using TensorFlow, the open source machine learning platform developed at Google, to detect the sound of chainsaws illegally cutting down trees.

In New Zealand, TensorFlow is being used to analyse thousands of hours of audio recordings made of endangered birds.

Dr. Amanda Hodgson of Murdoch University and Dr. Frederic Maire, a computer scientist at Queensland University of Technology, are the lead researchers in a team that uses TensorFlow to analyse drone photography of the ocean to detect the presence of dugongs.

Moving on to languages, Google announced that it has been working for the past 18 months with the ARC Centre of Excellence for the Dynamics of Language to transcribe and preserve indigenous languages.

Every Australian indigenous language is tied to a place, which is explained at the Gambay map website.

The researchers from the Centre told the audience that many thousands of hours of recordings of spoken indigenous language had been made over a long time. For this audio data to be useful, it has to be transcribed by a linguist, which is a tough, slow, laborious process. Through their collaboration with Google, they can save a lot of time and transcribe a lot more audio.

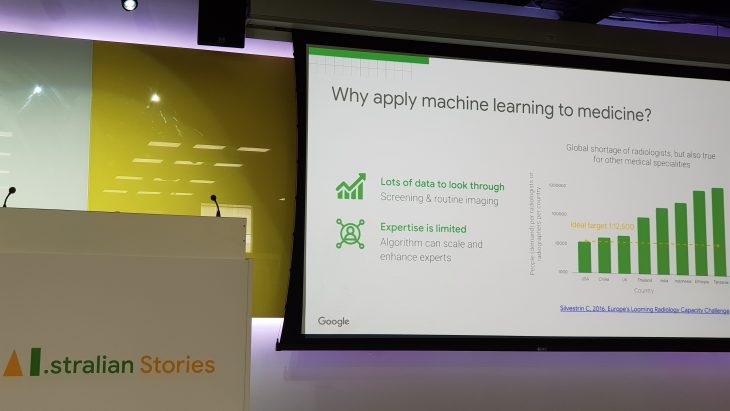

Lastly, the event showcased interesting ways that artificial intelligence technologies made by Google were being used in the healthcare field.

Dr. Lily Peng, product manager of medical imaging at Google AI, gave us an update on a project she has been working on for several years, using machine learning to tackle the worldwide problem of diabetic retinopathy, the fastest growing cause of blindness.

After the formal presentations were over, there was time to investigate some of the demonstration areas around the room showcasing Google Lens, Google Translate and a Google Cloud drawing recognition experiment, before departing via the colourful lift doors.

FYI Stuart, standalone Google Lens has been available in the Play Store for a few days now: https://play.google.com/store/apps/details?id=com.google.ar.lens

“Sabharwal also announced that a standalone Google lens app would be available from today in the Play Store for all Android devices”

Can’t find this? Is it available in Australia?

We can’t find it either, but he said it…

I asked Google to re-confirm what Anil said. Their response was:

“Yes, that’s right. Google will be adding a Lens app to the Play Store, which will provide another option for users to quickly access the same real-time Lens experience from their home screen. This will be rolling out soon and we can let you know when it’s live.”